A quantum neural network can see optical illusions like humans do. Could it be the future of AI?

Master1305 / Shutterstock

Optical illusions, quantum mechanics and neural networks might seem quite unrelated at first glance. However, in new research I have used a phenomenon called “quantum tunnelling” to design a neural network that can “see” optical illusions in much the same way humans do.

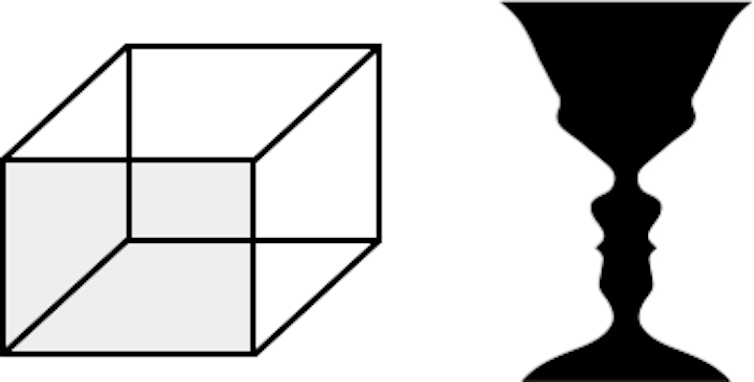

My neural network did well at simulating human perception of the famous Necker cube and Rubin’s vase illusions – and in fact better than some much larger conventional neural networks used in computer vision.

This work may also shed light on the question of whether artificial intelligence (AI) systems can ever truly achieve something like human cognition.

The Necker cube (left) and Rubin’s vase (right). Each has two interpretations. Is the shaded face of the cube at the back or the front? And do you see two people looking towards each other or a vase?

Ivan Maksymov

Why optical illusions?

Optical illusions trick our brains into seeing things which may or may not be real. We do not fully understand how optical illusions work, but studying them can teach us about how our brains work and how they sometimes fail, in cases such as dementia and on long space flights.

Researchers using AI to emulate and study human vision have found that optical illusions pose a problem. While computer vision systems can recognise complex objects such as paintings, they often cannot understand optical illusions. (The latest models appear to recognise at least some kinds of illusions, but these results require further investigation.)

My research addresses this problem with the use of quantum physics.

How does my neural network work?

When a human brain processes information, it decides which data are useful and which are not. A neural network imitates this function of the brain using many layers of artificial neurons, which enable it to store data and classify it as useful or not.

Neurons are activated by signals from their neighbours. Imagine each neuron has to climb over a brick wall to be switched on, and signals from neighbours bump it higher and higher, until eventually it gets over the top and reaches the activation point on the other side.

In quantum mechanics, tiny objects like electrons can sometimes pass through apparently impenetrable barriers via an effect called “quantum tunnelling”. In my neural network, quantum tunnelling lets neurons sometimes jump straight through the brick wall to the activation point and switch on even when they “shouldn’t”.
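The brick-wall picture can be made concrete with a small sketch. It is only an illustration, not the network's actual code: it assumes the neuron's activation is given by the textbook probability that a particle passes a rectangular barrier. The function names, the barrier height and width, and the choice of units (ħ = m = 1) are all hypothetical choices made for this example.

```python
import math


def classical_activation(energy, barrier_height=1.0):
    """Brick-wall neuron: on only if the signal clears the top of the wall."""
    return 1.0 if energy > barrier_height else 0.0


def tunnelling_activation(energy, barrier_height=1.0, barrier_width=3.0):
    """Transmission probability through a rectangular barrier.

    Textbook formula in illustrative units where hbar = m = 1.
    The default height and width are assumptions for this sketch.
    """
    if energy <= 0.0:
        return 0.0
    v0, a = barrier_height, barrier_width
    if math.isclose(energy, v0):
        # Limit of the formula as the signal energy approaches the wall height.
        return 1.0 / (1.0 + v0 * a * a / 2.0)
    if energy < v0:
        # Below the wall: small but non-zero chance of tunnelling through.
        kappa = math.sqrt(2.0 * (v0 - energy))
        term = (v0 * math.sinh(kappa * a)) ** 2 / (4.0 * energy * (v0 - energy))
        return 1.0 / (1.0 + term)
    # Above the wall: mostly transmitted, with some quantum reflection.
    k = math.sqrt(2.0 * (energy - v0))
    term = (v0 * math.sin(k * a)) ** 2 / (4.0 * energy * (energy - v0))
    return 1.0 / (1.0 + term)


if __name__ == "__main__":
    for e in (0.2, 0.5, 0.8, 1.0, 1.5, 3.0):
        print(e, classical_activation(e), round(tunnelling_activation(e), 4))
```

Below the wall height the classical neuron stays at exactly zero, while the tunnelling neuron returns a small value that grows as the signal approaches the top. That graded response is what lets a neuron switch on even when it “shouldn’t”.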

Why quantum tunnelling?

The discovery of quantum tunnelling in the early decades of the 20th century allowed scientists to explain natural phenomena such as radioactive decay that seemed impossible according to classical physics.

In the 21st century, scientists face a similar problem. Existing theories fall short of explaining human perception, behaviour and decision-making.

Research has shown that tools from quantum mechanics may help explain human behaviour and decision-making.

Some have suggested that quantum effects play an important role in our brains. Even if they don’t, we may still find the laws of quantum mechanics useful for modelling human thinking. For example, quantum algorithms are more efficient than classical algorithms for certain tasks.

With this in mind, I wanted to find out what would happen if I injected quantum effects into the workings of a neural network.

So, how does the quantum tunnelling network perform?

When we see an optical illusion with two possible interpretations (like the ambiguous cube or the vase and faces), researchers believe we temporarily hold both interpretations at the same time, until our brains decide which picture should be seen.

This situation resembles the quantum-mechanical thought experiment of Schrödinger’s cat. This famous scenario describes a cat in a box whose life depends on the decay of a quantum particle. According to quantum mechanics, the particle can be in two different states at the same time until we observe it – and so the cat can likewise simultaneously be alive and dead.

I trained my quantum-tunnelling neural network to recognise the Necker cube and Rubin’s vase illusions. When faced with the illusion as an input, it produced an output of one or the other of the two interpretations.

Over time, the interpretation it chose switched back and forth. Traditional neural networks also produce this behaviour, but my network additionally produced some ambiguous results hovering between the two definite outputs – much as our own brains can hold both interpretations together before settling on one.

What now?

In an era of deepfakes and fake news, understanding how our brains process illusions and build models of reality has never been more important.

In other research, I am exploring how quantum effects may also help us understand social behaviour and radicalisation of opinions in social networks.

In the long term, quantum-powered AI may contribute to the development of conscious robots. But for now, my research continues.


Ivan Maksymov does not work for, consult, own shares in or receive funding from any company or organisation that would benefit from this article, and has disclosed no relevant affiliations beyond their academic appointment.